#09. VoiceOver: using an iPhone without seeing a screen.

A quick introduction to VoiceOver and what to consider when designing for its users.

“How can a blind person use an iPhone?” asked a seasoned product designer. The question had never crossed their mind, and when challenged with it, they could not imagine an answer. How can someone interact with a touchscreen without seeing it?

In this issue of “The Accessibility Apprentice”, we will explore how VoiceOver, Apple’s gesture-based screen reader, works, and what designers should consider when delivering accessible products for iOS.

This is “The Accessibility Apprentice”, a free bi-weekly accessibility newsletter. If you want to receive more insights, teardowns, and accessibility tips in your email, consider subscribing.

What is VoiceOver?

VoiceOver is a screen reader built into Apple’s operating systems that “gives audible descriptions of what’s on your screen”. Its Android counterpart is called TalkBack. There are some key differences between the two, which this issue won’t cover.

In the age of flat touch screens and direct interaction (“tap the target to interact with it”), VoiceOver turns the phone screen into a control panel of sorts. In a way, it resembles the way phones used to work in the golden era of mobile design.

With a physical keyboard under the screen, users would press “left” and “right” to move focus between targets, press the action button to activate the focused one, or press “home” to return to the desktop. The focus state (a bright ring around the element) was prominent and easy to spot. Focus order was predictable, so pressing “down” wouldn’t suddenly take you to the top of the page.

In the good old days, however, accessibility was not really a thing. For instance, Nokia did not release its free Nokia Screen Reader (NSR) until 2011, a year before Symbian OS was discontinued. NSR may have been a great product, but interestingly enough, the top comment under a review of NSR reads:

With regards to visually impaired usage, I really do believe that Apple lead the way here, they simply provide an absolute wealth of *built in* options that are simply unrivalled on any other platform.

Apple was indeed ahead of its competitors: VoiceOver has been part of macOS since 2005, and it reached the iPod shuffle and nano, the iPhone 3GS, and the iPad well before NSR was released. Feature phones, however, still offer a good analogy for how screen readers work: they turn the screen into a gesture-based keyboard and use speech to convey information.

Start using VoiceOver in 3 steps

Turn it on

Since VoiceOver is already built into your device, you don’t need to download or install anything. Simply go to “Settings” → “Accessibility” → “VoiceOver”, and you will find it there.

Apple has a good short video that teaches the basics of VoiceOver navigation.

Once VoiceOver is on, it’s a good time to practice the key gestures.

Basic gestures

With VoiceOver on, every element that you touch or move your finger to will be placed in focus, and your phone will read it aloud.

A detailed VoiceOver guide by Orange showcases some common gestures you may wish to practice.

Drag

Imagine that your finger is a mouse cursor. Drag it across the screen to hear what’s underneath. Close your eyes and let your iPhone be your guide.

Double tap anywhere

To activate the element in focus, double-tap anywhere on the screen. This activates the last item that was read aloud.

Swipe left or right with one finger

This moves focus to the previous (left) or next (right) element on the screen, much like pressing the arrow keys.

Swipe left or right with three fingers

Use three fingers to move between pages and screens; this replaces the ordinary one-finger horizontal swipe.

Slowly swipe up from the bottom until you feel a light vibration

This one is tricky, but unless you own an older iPhone with a Home button, you will need this gesture to return to the Home screen. Use the same gesture as you normally would, but move your finger slowly and gently, and release as soon as you feel the first tick.

Practice

Practice makes perfect. Try using your favourite apps and notice how flawed, or even completely broken, the experience can be. Remember that thousands of people deal with this every day.

If you are afraid of breaking things, launch a sandbox and practice the key VoiceOver gestures in a safe environment.

Design for screen readers

Once you know how screen readers work and the pain points users encounter daily, use that knowledge to improve your design practice and products.

A product designer’s scope of work in the accessibility arena is vast, ranging from establishing basic interaction standards to collecting user feedback and conducting audits.

In the previous issues of “The Accessibility Apprentice”, we covered:

Facilitating Usability Testing sessions with blind users (#01);

Getting started with Accessibility at your organisation (#04).

Designing for screen readers is a tough craft, but ensuring that everyone can access the product is, quite literally, the designer’s job.

Make sure the headings on each page reflect the hierarchy of information, from the large title down to section titles. VoiceOver users can jump from heading to heading with the rotor, so think of headings as the page’s table of contents, and make sure your engineers mark them up as headings.

Add alt text to all images except decorative ones. If an image conveys information of any kind, provide a text description detailed enough for a screen reader user to understand what is in the picture.

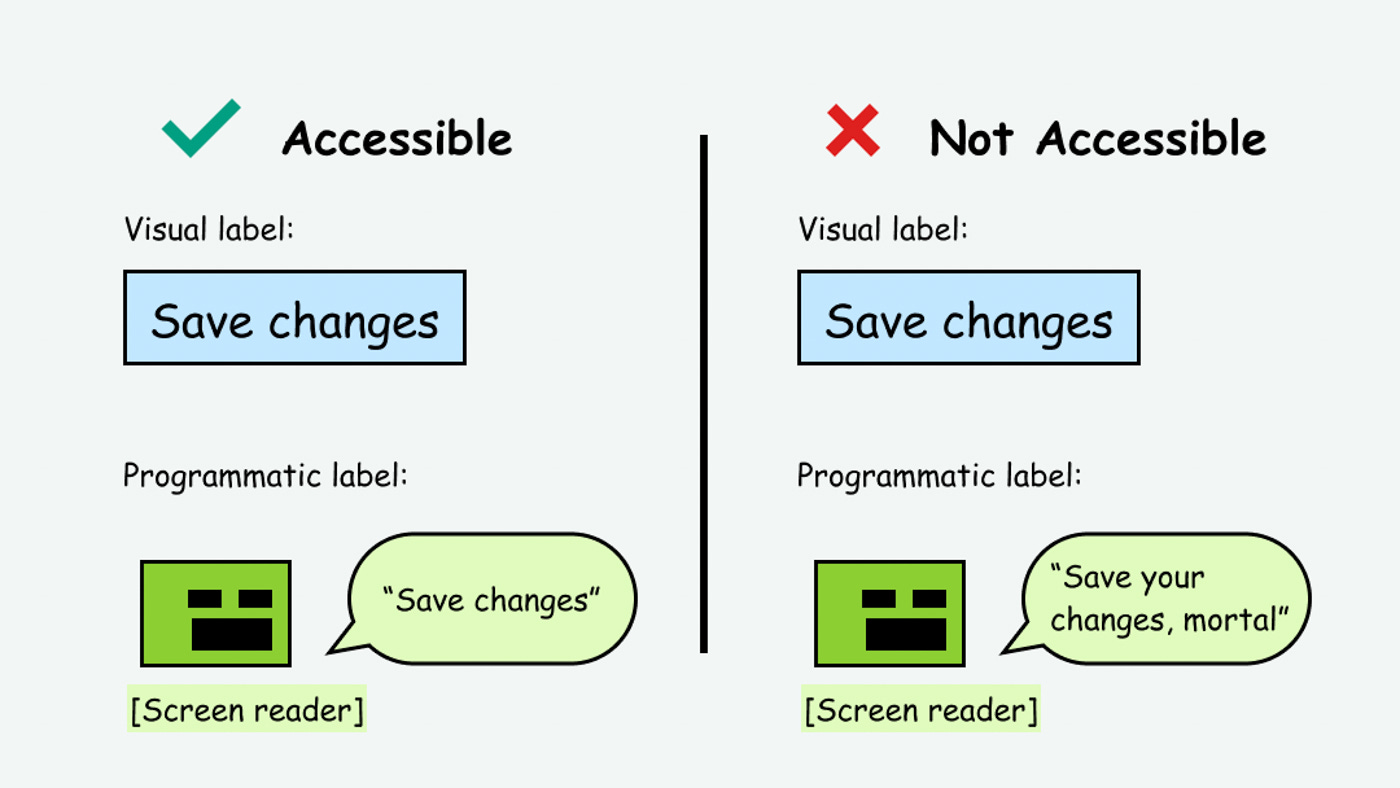

Check that screen readers announce labels correctly, and provide alternative labels where needed. For instance, an icon-only button will almost certainly need a screen-reader label, whereas a regular button with visible text usually works without one.
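To make these three rules concrete for the engineers you hand designs to, here is a toy model of what a user hears when swiping right through a screen. It is a sketch under loudly stated assumptions: the `Role` and `Element` types, the `announcements(for:)` function, and the announcement wording (including “unlabelled”) are hypothetical simplifications, not VoiceOver’s actual behaviour or any Apple API.

```swift
// Toy model of a screen reader walking a screen in swipe-right order.
// Hypothetical: `Role`, `Element`, and the announcement wording are
// simplifications for illustration, not VoiceOver's real behaviour or API.

enum Role {
    case heading, text, button, image
}

struct Element {
    let role: Role
    let label: String?       // text the screen reader can speak, if any
    let isDecorative: Bool   // decorative images carry no information

    init(_ role: Role, label: String? = nil, isDecorative: Bool = false) {
        self.role = role
        self.label = label
        self.isDecorative = isDecorative
    }
}

/// Returns one announcement per focus stop, in the order a one-finger
/// swipe right would reach them. Decorative elements are skipped entirely.
func announcements(for elements: [Element]) -> [String] {
    elements
        .filter { !$0.isDecorative }
        .map { element -> String in
            // An element without a label gives the user nothing to go on.
            let name = element.label ?? "unlabelled"
            switch element.role {
            case .heading: return "\(name), heading"
            case .button:  return "\(name), button"
            case .image:   return "\(name), image"
            case .text:    return name
            }
        }
}

// A hypothetical screen: a title, a decorative flourish, some text,
// and two icon-only close buttons, only one of which has a label.
let screen: [Element] = [
    Element(.heading, label: "Order summary"),
    Element(.image, isDecorative: true),
    Element(.text, label: "2 items in your basket"),
    Element(.button),
    Element(.button, label: "Close"),
]

for line in announcements(for: screen) {
    print(line)
}
// Prints:
// Order summary, heading
// 2 items in your basket
// unlabelled, button
// Close, button
```

The heading announces its role, the decorative image is never reached, and the two identical icons sound completely different depending on whether someone gave them a label. In a real SwiftUI app, those decisions would correspond to modifiers such as `.accessibilityAddTraits(.isHeader)` on a heading, `Image(decorative:)` for a purely decorative image, and `.accessibilityLabel(_:)` on an icon-only button; UIKit offers equivalent accessibility properties.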

P.S.

Visual impairments vary in degree. Some people with limited sight only use VoiceOver in certain situations (e.g. poor lighting conditions). Others rely on it for all their digital interactions.

Aim to test with a diverse pool of participants so that everyone can enjoy unrestricted access to your product.

VoiceOver is a powerful tool that does much more than any one article can cover. In the following issues, we will explore best practices for designing for screen readers.

Stay tuned!